Research Design

Research design refers to the planned, systematic, and structured approach employed to investigate research questions and achieve accurate results. The plan is the overall scheme or program of the investigation: it outlines everything the investigator will do, from formulating the hypotheses and their operational implications to the final analysis of data.

A research design is the arrangement of conditions for the collection and analysis of data in a manner that combines relevance to the research purpose with economy in procedure.

- Vimal Shah: Research design is the plan of a study, whether controlled or uncontrolled, and subjective or objective.

- Kerlinger: Research design is the plan, structure, and strategy of investigation conceived so as to obtain answers to research questions and to control variance.

Characteristics of Research Design

For a research design to be considered sound and scientifically credible, it must possess a defined set of characteristics that collectively determine the quality, trustworthiness, and replicability of the research.

Validity

Validity refers to the degree to which a research design and its instruments accurately measure what they are intended to measure. An effective study design allows the appropriate selection of measuring instruments to assess outcomes in accordance with the research purpose.

Validity is broadly classified into content validity (coverage of all relevant dimensions), construct validity (accurate representation of the theoretical concept), internal validity (outcomes genuinely caused by the variables under study), and external validity (applicability of findings beyond the study context).

Generalizability

An effective study design yields results that can be extended to a broader population, without being unduly constrained by sample size or the characteristics of the specific research group. Generalizability depends on the representativeness of the sample, the adequacy of sample size, and the minimization of selection bias. It is typically achieved through appropriate probability sampling techniques such as simple random or stratified random sampling.
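The following Python sketch illustrates proportional stratified random sampling, one of the probability techniques named above. It is a minimal illustration under stated assumptions. The population, the urban/rural strata, and the function name `stratified_sample` are all hypothetical. Each stratum's share of the sample is simply rounded to the nearest whole unit.

```python
import random
from collections import Counter, defaultdict

def stratified_sample(population, stratum_of, total_n, seed=None):
    """Proportional stratified random sample (hypothetical helper).

    population -- list of sampling units
    stratum_of -- function returning the stratum label of a unit
    total_n    -- desired overall sample size
    """
    rng = random.Random(seed)
    strata = defaultdict(list)
    for unit in population:
        strata[stratum_of(unit)].append(unit)

    sample = []
    for units in strata.values():
        # Each stratum's share of the sample equals its share of the population,
        # rounded to the nearest unit; a simple random sample is drawn within it.
        n_h = round(total_n * len(units) / len(population))
        sample.extend(rng.sample(units, n_h))
    return sample

# Hypothetical population: 1,000 respondents, 600 urban and 400 rural.
population = [(i, "urban" if i < 600 else "rural") for i in range(1000)]
sample = stratified_sample(population, stratum_of=lambda unit: unit[1], total_n=100, seed=42)
counts = Counter(area for _, area in sample)
print(dict(counts))  # {'urban': 60, 'rural': 40}
```

Because each stratum contributes in proportion to its size, the sample mirrors the population's urban/rural composition. With less convenient proportions, rounding can make the stratum sizes differ slightly from `total_n`.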

Neutrality

Before commencing a research project, the researcher must state the assumptions that will be tested. An appropriate study design ensures that these assumptions are neutral and free from bias, and that the data gathered address the assumptions stated at the outset. Standardized data collection tools, double-blind procedures, and pre-registration of hypotheses help maintain neutrality by preventing research questions from being altered after the data have been seen.

Objectivity

Objectivity ensures that study results are not influenced by the personal opinions of an individual researcher, so that multiple individuals can agree on the findings and conclusions. It is supported by transparent and systematic methods of data gathering and analysis, well-specified operational definitions, and peer review. Together these help ensure that independent researchers examining the same data reach similar results.

Reliability

Reliability refers to the consistency of measurements across repeated administrations, with minimal random error. An effective study design should produce results that are unlikely to be distorted by chance errors. Reliability is commonly assessed through test-retest reliability, inter-rater reliability, or internal consistency. Internal consistency is usually measured by Cronbach’s Alpha, for which a value of 0.70 or higher is generally considered satisfactory.
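Cronbach’s Alpha for a scale of k items is α = k/(k − 1) × (1 − sum of item variances / variance of total scores). The Python sketch below computes it directly from that formula. The five-respondent, three-item Likert data are hypothetical and serve only to show the arithmetic.

```python
from statistics import variance

def cronbach_alpha(responses):
    """Cronbach's Alpha for a respondents-by-items table of scores."""
    k = len(responses[0])  # number of items in the scale
    if k < 2:
        raise ValueError("Cronbach's Alpha needs at least two items")
    items = list(zip(*responses))             # one tuple of scores per item
    sum_item_var = sum(variance(item) for item in items)
    totals = [sum(row) for row in responses]  # each respondent's total score
    return (k / (k - 1)) * (1 - sum_item_var / variance(totals))

# Hypothetical pilot data: 5 respondents x 3 Likert items (1-5).
pilot = [
    [4, 5, 4],
    [2, 3, 3],
    [5, 5, 4],
    [3, 3, 2],
    [1, 2, 2],
]
alpha = cronbach_alpha(pilot)
print(f"{alpha:.3f}")  # 0.944
```

Sample and population variances give the same result here, because the n/(n − 1) factor cancels in the ratio. By the 0.70 rule of thumb, this hypothetical scale would be judged satisfactory.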

Ethical Integrity

A strong research design is inherently linked to the principle of ethical integrity. A rigorous design must ensure the ethical treatment of human subjects, including informed consent, confidentiality, protection from harm, and the ability to withdraw. Results must be disseminated honestly and without bias, while adhering to institutional ethical standards throughout the study process.

Feasibility

The capacity to carry out the study within the constraints of time, money, human resources, and access to the study population is known as feasibility. A successful research design balances methodological rigor with practical implementation, so that the study is practicable without compromising its scientific integrity.

Flexibility

Flexibility is the ability of a research design to respond to unanticipated changes without undermining its integrity. A degree of built-in flexibility allows the researcher to respond to sampling challenges, instrument ambiguities discovered during piloting, or changes in the availability of the research population.

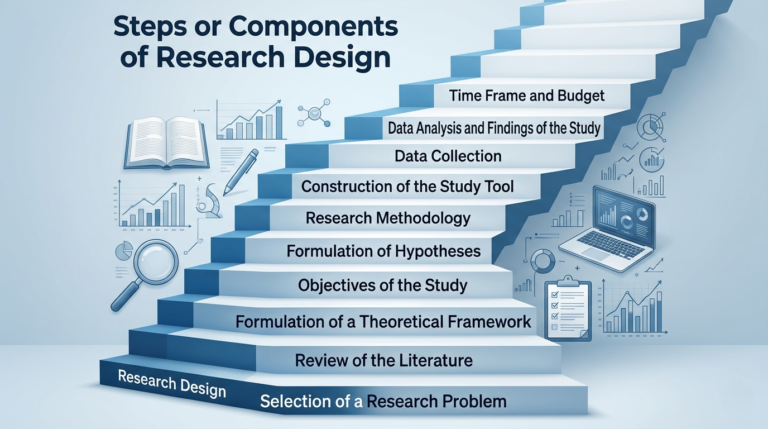

Steps or Components of Research Design

Research design is an iterative process comprising several components. Each component builds logically on the one before it. The result is a coherent methodological sequence that runs from the selection of the research problem to the presentation of findings.

Step 1: Selection of a Research Problem

The selection of a research problem constitutes the foundational stage upon which the whole study design is predicated. The researcher must identify a topic of genuine academic or practical significance, clearly describe it, and clarify the study’s objective.

The selected problem must be researchable and clearly delimited, and it should address a genuine gap in existing knowledge. A well-defined research problem guides the subsequent procedure and keeps the investigation focused.

Step 2: Review of the Literature

A literature review is a methodical analysis of the available academic literature on the chosen research problem, including journal articles, books, theses, and credible reports. It situates the present study within existing knowledge, reveals gaps in previous research, and avoids unnecessary duplication. It also guides the development of research questions, hypotheses, and the theoretical framework. A critical synthesis, rather than a mere summary of previous studies, is a core attribute of the literature review.

Step 3: Formulation of a Theoretical Framework

The theoretical framework provides the interpretive perspective for data analysis by identifying existing theories or conceptual models that explain the phenomena under investigation. It offers a rationale for the study’s primary variables and the expected relationships among them. For example, depending on the focus of the inquiry, a study of marital patterns might draw on Modernization Theory or Social Exchange Theory.

Step 4: Objectives of the Study

Objectives are precise, actionable statements that define what the researcher aims to achieve. They translate the broad research problem into specific, measurable, and attainable goals, typically expressed using action verbs such as ‘to identify,’ ‘to examine,’ or ‘to assess.’ Each objective must correspond to a specific aspect of the research problem, guide the construction of data collection instruments, and remain logically consistent with the hypotheses.

Step 5: Formulation of Hypotheses

A hypothesis is a tentative, testable statement predicting the expected relationship between two or more variables, derived from the literature review and theoretical framework. For each research hypothesis, a corresponding null hypothesis stating that no relationship exists must also be formulated, since it is the null hypothesis that is statistically tested. Hypotheses must be falsifiable. The significance level for testing should be specified in advance; it is conventionally α = 0.05, so that the null hypothesis is rejected when p ≤ 0.05.
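The Python sketch below illustrates testing a null hypothesis at a significance level fixed in advance, using a two-sided permutation test for a difference in group means. The permutation test is a swapped-in choice, made only because it needs nothing beyond the standard library. The text does not prescribe a particular test, and the scores are hypothetical.

```python
import random
from statistics import mean

ALPHA = 0.05  # significance level, specified before the data are examined

def permutation_test(group_a, group_b, n_permutations=10_000, seed=0):
    """Two-sided permutation test of H0: no difference between group means.

    Returns the observed difference in means and an estimated p-value.
    """
    rng = random.Random(seed)
    observed = mean(group_a) - mean(group_b)
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    extreme = 0
    for _ in range(n_permutations):
        # Under H0 the group labels are arbitrary, so reshuffle them.
        rng.shuffle(pooled)
        diff = mean(pooled[:n_a]) - mean(pooled[n_a:])
        if abs(diff) >= abs(observed):
            extreme += 1
    # Add-one correction keeps the estimated p-value above zero.
    p_value = (extreme + 1) / (n_permutations + 1)
    return observed, p_value

# Hypothetical test scores for two groups of respondents.
group_a = [10, 11, 12, 13, 14, 15]
group_b = [1, 2, 3, 4, 5, 6]
diff, p = permutation_test(group_a, group_b)
decision = "reject H0" if p <= ALPHA else "fail to reject H0"
print(f"difference = {diff}, p = {p:.4f}, {decision}")
```

The decision rule compares the p-value with the pre-specified `ALPHA`. If the threshold were chosen after seeing the data, the test would lose its meaning.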

Step 6: Research Methodology

Research methodology encompasses the overall strategy adopted to conduct the study. It comprises three interrelated sub-components:

- Method: The research method refers to the overall approach (quantitative, qualitative, or mixed-methods) and the specific design adopted, such as descriptive, correlational, or cross-sectional. The method must be appropriate to the research objectives and hypotheses.

- Tools of Data Collection: Data collection tools are the instruments used to gather information from respondents, such as questionnaires, interview schedules, or observation checklists. The choice of tool must be justified with reference to the research method and the nature of the study population, and each tool must be tested for validity and reliability prior to full-scale deployment.

- Sampling: Sampling involves selecting a subset of the target population from whom data will be collected. The sampling technique (probability or non-probability) must be explicitly stated and justified. Clear inclusion and exclusion criteria for participants must be defined, and the sample size must be large enough to yield statistically meaningful results.
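The required sample size depends on the design and the planned analysis. One common rule for estimating a population proportion is Cochran’s formula, n₀ = z²p(1 − p)/e², optionally followed by a finite population correction. The sketch below illustrates that rule under stated assumptions: a 95% confidence level (z = 1.96) and the most conservative anticipated proportion (p = 0.5). The text itself does not prescribe a formula.

```python
import math

def cochran_sample_size(p=0.5, margin=0.05, z=1.96, population=None):
    """Sample size for estimating a proportion (Cochran's formula).

    p          -- anticipated proportion (0.5 gives the largest, most cautious n)
    margin     -- desired margin of error, e.g. 0.05 for +/- 5 percentage points
    z          -- standard normal value for the confidence level (1.96 ~ 95%)
    population -- optional finite population size for the correction
    """
    n0 = z ** 2 * p * (1 - p) / margin ** 2
    if population is not None:
        # Finite population correction: smaller populations need fewer cases.
        n0 = n0 / (1 + (n0 - 1) / population)
    return math.ceil(n0)  # always round up to a whole respondent

print(cochran_sample_size())                 # 385
print(cochran_sample_size(population=1000))  # 278
```

Other designs, such as comparing group means or fitting regression models, call for a power analysis instead of this proportion-based rule.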

Step 7: Construction of the Study Tool

This step translates the study’s concepts into concrete, measurable items. The tool is shaped by the study’s objectives and grounded in themes identified in the literature review. In addition to clear wording and an appropriate response format, such as a Likert scale, the questionnaire should be logically organized into sections.

A pilot study involving 10–30 respondents must be conducted before full-scale deployment, and Cronbach’s Alpha should be used to assess the internal consistency of multi-item scales.

Step 8: Data Collection

Data collection is the systematic procedure of acquiring empirical data from the selected sample using the approved research instrument. It requires careful logistical organization, including the method of administration and the scheduling of fieldwork. It also requires strict compliance with ethical requirements such as respondent anonymity and informed consent.

Step 9: Data Analysis and Findings of the Study

Data analysis entails applying statistical or thematic methods to the collected data in order to test hypotheses and achieve the study objectives. In quantitative research, this includes descriptive statistics and inferential tests such as the t-test, chi-square test, correlation, and regression analysis. Each inferential test is carried out at a predefined significance level.
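The Python sketch below illustrates one of the inferential tests listed above: Pearson’s chi-square test of independence for a 2 × 2 table. It applies no continuity correction. It relies on the fact that a chi-square variable with one degree of freedom is the square of a standard normal variable, so the p-value can be obtained with `math.erfc`. The counts are hypothetical.

```python
import math

def chi_square_2x2(table):
    """Pearson chi-square test of independence for a 2x2 table of counts
    (no continuity correction). Returns (statistic, df, p_value)."""
    if len(table) != 2 or any(len(row) != 2 for row in table):
        raise ValueError("this sketch handles 2x2 tables only")
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count if the row and column variables are independent.
            expected = row_totals[i] * col_totals[j] / n
            stat += (observed - expected) ** 2 / expected
    df = 1  # (rows - 1) * (columns - 1) for a 2x2 table
    # With df = 1, P(chi-square > stat) = erfc(sqrt(stat / 2)).
    p_value = math.erfc(math.sqrt(stat / 2))
    return stat, df, p_value

# Hypothetical counts: rows = two groups, columns = agree / disagree.
table = [[30, 20],
         [10, 40]]
stat, df, p = chi_square_2x2(table)
print(round(stat, 2), df)  # 16.67 1
print(p)
```

Tables larger than 2 × 2 have more degrees of freedom. Their p-values would need a general chi-square distribution function, for example from a statistics library.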

Interpretation goes beyond statistical reporting. It places the results in the context of the theoretical framework and recent literature and assesses whether the study’s assumptions are supported. Findings are the specific empirical conclusions drawn from data analysis, while the conclusions state what those findings mean for the study’s objectives and theoretical framework.

Recommendations offer practical or policy-oriented suggestions for relevant stakeholders and for future research. This step must also acknowledge the limitations of the study, such as sample size, sampling procedure, or a cross-sectional design, so that readers can place the results in their proper context.

Step 10: Time Frame and Budget

Every study design must have a feasible budget and timeline. The time frame is typically presented as a timetable or work plan (often a Gantt chart) that allocates a period to each stage of the research process.

The budget outlines the expected financial resources required for printing, transportation, data analysis software, and other expenses. Specifying these elements establishes the study’s feasibility and supports effective resource management.

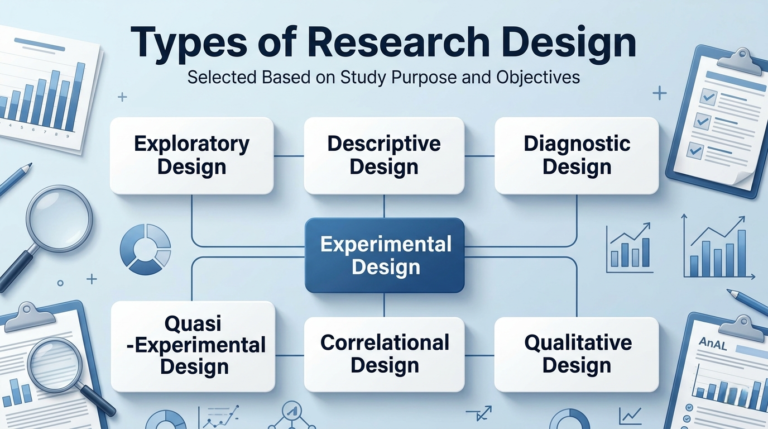

Types of Research Design

Research design is the strategic framework that guides a researcher throughout a study. It is the overall plan that specifies how data will be collected, from whom, at what time, and how it will be analyzed to answer the research questions. Different types of research design are adopted depending on the purpose of the study, the nature of the problem, and the objectives of the research.

According to Purpose

The following three designs reflect the broad goal or intention behind undertaking a study.

Exploratory Research Design

In exploratory research the researcher uses flexible and unstructured methods, such as literature reviews, focus groups, and expert interviews, to obtain preliminary information about a topic. Exploratory research is appropriate when a problem is not yet clearly defined and the researcher needs to become familiar with the subject before committing to a formal study design.

This design does not require a hypothesis to be formulated in advance. Instead, the research explores concepts and unexamined aspects of a subject to answer questions such as what, how, and why. Common data-collection methods include secondary data analysis, unstructured interviews, pilot surveys, and observation. Results from exploratory studies are typically qualitative and tentative, forming the basis for more structured follow-up research.

Descriptive Research Design

In descriptive research the researcher is interested in describing a situation or phenomenon as it actually exists. This design answers the questions what, who, when, and where but does NOT establish cause and effect.

Data are collected using techniques such as structured observation, surveys, and censuses. The researcher records the characteristics of a population or phenomenon without manipulating any variables. Typical examples include population censuses, market-research surveys, and prevalence studies.

Diagnostic Research Design

Diagnostic research design explores the reason behind an issue and seeks solutions to resolve it. It is most commonly used in applied, organizational, and clinical settings where a problem exists and practical remedies are required.

The design proceeds through four structured phases:

- Identification and definition of the problem

- Diagnosis of the causes of the problem

- Formulation of remedial measures

- Implementation and evaluation of the solution

Data are collected through interviews, observations, document analysis, and formal and informal testing.

According to Time Dimension

Cross-Sectional Design

A cross-sectional study collects data from many different individuals at a single point in time. It is used to determine the prevalence of a phenomenon, situation, problem, attitude, or issue. This design is sometimes called a ‘snapshot’ study. It is relatively quick, inexpensive, and simple to administer.

The primary limitation is that it cannot measure change over time. Additional limitations include: inability to establish temporal sequence (necessary for causal inference), susceptibility to cohort effects, and potential confounding by variables not measured at the single time-point. Example: measuring the current prevalence of hypertension in a community.
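Because a cross-sectional study measures each respondent once, prevalence is simply the proportion of the sample with the condition at that time point. The Python sketch below adds a 95% confidence interval using the normal-approximation (Wald) formula, which is one simple choice among several. The counts are hypothetical and are not taken from any real community survey.

```python
import math

def prevalence_with_ci(cases, n, z=1.96):
    """Point prevalence and a Wald (normal-approximation) confidence interval."""
    p = cases / n
    se = math.sqrt(p * (1 - p) / n)  # standard error of a proportion
    return p, max(0.0, p - z * se), min(1.0, p + z * se)

# Hypothetical survey: 120 of 800 adults screened have hypertension.
p, lower, upper = prevalence_with_ci(cases=120, n=800)
print(f"prevalence = {p:.1%} (95% CI {lower:.1%} to {upper:.1%})")
# prevalence = 15.0% (95% CI 12.5% to 17.5%)
```

The Wald interval performs poorly when prevalence is very close to 0% or 100% or when samples are small. In those cases, alternatives such as the Wilson interval are often preferred.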

Longitudinal Design

In longitudinal studies the same population is studied repeatedly, at intervals, over an extended period. Intervals may range from a week to more than a year. The key feature is that data are collected at multiple points in time, allowing the researcher to track change. In the strictest form, the panel study, the SAME individuals or units are measured at every wave.

Longitudinal designs fall into three sub-types:

- Panel studies, in which the same individuals are tracked over time.
- Cohort studies, in which groups sharing a defining characteristic (e.g., birth year) are followed.
- Trend studies, in which different samples from the same population are drawn at each time point.

Example: tracking English-language proficiency in a specific community over five years.
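The practical difference between a panel study and a trend study lies in who is measured at each wave. The Python sketch below makes that contrast concrete under simplifying assumptions. The population is a hypothetical list of respondent IDs, the function names are illustrative, and attrition (respondents dropping out of a panel) is ignored.

```python
import random

def panel_waves(population, n, waves, seed=0):
    """Panel study: one sample is drawn and the SAME units are re-measured at every wave."""
    rng = random.Random(seed)
    panel = rng.sample(population, n)
    return [list(panel) for _ in range(waves)]

def trend_waves(population, n, waves, seed=0):
    """Trend study: a fresh, independent sample is drawn from the same population at each wave."""
    rng = random.Random(seed)
    return [rng.sample(population, n) for _ in range(waves)]

population = list(range(10_000))  # hypothetical respondent IDs
panel = panel_waves(population, n=50, waves=3)
trend = trend_waves(population, n=50, waves=3)

# Respondents measured in both wave 1 and wave 3.
print(len(set(panel[0]) & set(panel[2])))  # 50: the panel tracks the same people
print(len(set(trend[0]) & set(trend[2])))  # usually 0 or close to it: different people
```

Only the panel design supports statements about how particular individuals changed. A trend design supports statements about how the population as a whole changed.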

According to Reference Period

Retrospective Design

This design investigates a phenomenon, situation, or problem that has already occurred. Studies are conducted on the basis of existing data records or respondents’ recall of past events. A key limitation is recall bias: participants may not accurately remember past events, which reduces data reliability.

Retrospective designs are common in epidemiology (e.g., case-control studies) and historical social research. Example: problems faced by migrants after the creation of Pakistan.

Prospective Design

In prospective research, individuals or units are followed forward in time from a clearly defined starting point, and data are collected as their characteristics or circumstances change. This design is stronger than retrospective designs for establishing temporal relationships. Exposures and characteristics are recorded before the outcomes occur, which reduces recall bias.

Example: Following a cohort of students through their educational careers to assess the effects of literature exposure on their belief systems.

Retrospective-Prospective (Before-and-After) Design

This design combines both orientations: it constructs a baseline from past data (retrospective) and then tracks outcomes going forward (prospective). It is also called a pre-post design. When a series of observations is taken both before and after the intervention, it is known as an interrupted time-series design. Because participants are not randomly assigned, it is classified as a quasi-experimental design.

In practice, most before-and-after studies without a control group, in which the baseline is drawn from the same population before the intervention, fall into this category. Example: evaluating the effect of a road-safety awareness campaign by comparing accident rates before and after implementation.
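The before-and-after comparison in the road-safety example reduces to simple arithmetic on the baseline and follow-up periods. The Python sketch below uses hypothetical monthly accident counts. Because there is no randomized control group, the computed change describes what happened after the campaign; on its own, it cannot be attributed to the campaign.

```python
from statistics import mean

def percent_change(before, after):
    """Percentage change in the mean outcome from the baseline to the follow-up period."""
    baseline = mean(before)
    follow_up = mean(after)
    return 100 * (follow_up - baseline) / baseline

# Hypothetical monthly accident counts, six months before and six months after.
before = [40, 42, 38, 44, 36, 40]
after = [30, 34, 32, 36, 28, 32]
print(f"{percent_change(before, after):.1f}%")  # -20.0%
```

A longer series of observations on each side of the campaign would help show whether the drop exceeds normal month-to-month or seasonal variation.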

According to Nature of Investigation

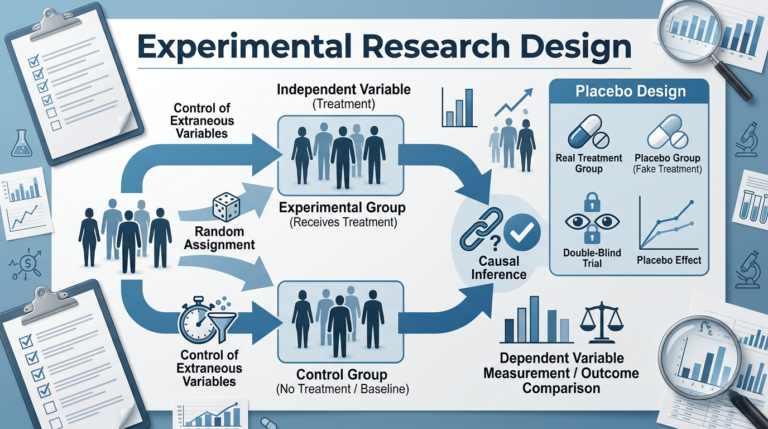

Experimental Research Design

In experimental research the investigator deliberately manipulates one or more independent variables and observes the resulting effect on the dependent variable, while controlling for extraneous factors. In a true experiment, participants are randomly assigned to groups. This random assignment is the defining feature that distinguishes a true experiment from a quasi-experiment, and it is what permits causal inference.

The experimental group receives the treatment (the application of the independent variable), while the control group serves as the baseline and does not receive the treatment. The dependent variable is measured in both groups to determine the causal effect of the treatment.
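Random assignment can be sketched in a few lines of Python. This minimal illustration assumes a simple (unstratified) randomization into two equal-sized groups. The participant labels, outcome scores, and function names are hypothetical. The treatment effect is then estimated as the difference in mean dependent-variable scores between the groups.

```python
import random
from statistics import mean

def randomly_assign(participants, seed=None):
    """Shuffle participants and split them into experimental and control groups."""
    rng = random.Random(seed)
    shuffled = list(participants)
    rng.shuffle(shuffled)  # every ordering is equally likely
    half = len(shuffled) // 2
    return {"experimental": shuffled[:half], "control": shuffled[half:]}

def estimated_effect(outcomes, groups):
    """Difference in mean dependent-variable scores: experimental minus control."""
    return (mean(outcomes[p] for p in groups["experimental"])
            - mean(outcomes[p] for p in groups["control"]))

participants = [f"P{i:02d}" for i in range(1, 21)]  # 20 hypothetical participants
groups = randomly_assign(participants, seed=7)

# Hypothetical post-treatment scores on the dependent variable.
outcomes = {p: 50 for p in participants}
for p in groups["experimental"]:
    outcomes[p] += 8  # pretend the treatment raised scores by 8 points
print(estimated_effect(outcomes, groups))  # 8
```

Because chance alone decides who receives the treatment, extraneous characteristics are expected to balance out between the groups. This balance is what lets the difference in means be read as a causal effect.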

Experimental designs in social science face particular ethical constraints: human participants cannot be subjected to every manipulation that is permissible in the natural sciences. This is one reason why quasi-experimental and non-experimental designs are more common in social research.

Placebo Design

A placebo design tests how effective a treatment or program is by comparing it with a placebo, an inactive substitute such as a sugar pill. In such studies, participants are divided into two groups: one receives the actual treatment, while the other receives the placebo.

This helps researchers determine whether improvements are due to the treatment itself or simply to participants’ belief that they are being treated. In a double-blind placebo trial, neither the participants nor the researchers know who is receiving the real treatment or the placebo. This reduces bias on both sides and makes the results more reliable.

Improvement in the placebo group is known as the placebo effect: the condition improves because of psychological factors, such as expectation, rather than the treatment itself.

Non-Experimental Research Design

In non-experimental research there is no manipulation of an independent variable. The researcher observes phenomena as they naturally occur and draws conclusions from those observations. Non-experimental research includes surveys, observational studies, correlational studies, secondary data analysis, and content analysis. It is widely used in social science, education, and public health where experimental manipulation would be unethical or impractical.

Quasi-Experimental Research Design

Quasi-experimental studies share properties of both experimental and non-experimental designs: part of the study is conducted under controlled conditions and part in a natural, non-experimental setting. A treatment or intervention is still applied, but the defining feature is the ABSENCE of random assignment to groups.

Common quasi-experimental designs include: (a) the non-equivalent control group design; (b) the interrupted time-series design; and (c) the regression discontinuity design. These designs allow causal inference to varying degrees but with greater risk of confounding than true experiments.

Correlational Research Design

Correlational research identifies and quantifies the statistical association between two or more variables. The researcher observes variables either at a single point in time (cross-sectional) or over time (longitudinal) and measures how strongly they co-vary.

Crucially, a correlation between two variables does NOT mean that one causes the other. Correlation only describes the degree to which variables move together (co-vary). Establishing causation also requires temporal precedence and the elimination of plausible alternative explanations. Both are most convincingly achieved through experimental manipulation with random assignment.

This design does not require two separate groups; it measures relationships within the same sample. Correlation coefficients range from −1.0 (perfect negative relationship) through 0 (no linear relationship) to +1.0 (perfect positive relationship).
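The coefficient referred to here is typically Pearson’s r: the covariance of the two variables divided by the product of their standard deviations. The Python sketch below computes it from that definition for three small, hypothetical data sets. The first two show the extremes of the scale. The third is a case where r is 0 even though the variables are clearly related, because the relationship is curved rather than linear.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length (at least 2 values)")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    # Sums of cross-products and squared deviations from the means.
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

x = [1, 2, 3, 4, 5]
print(pearson_r(x, [2, 4, 6, 8, 10]))  # 1.0  (perfect positive)
print(pearson_r(x, [10, 8, 6, 4, 2]))  # -1.0 (perfect negative)
print(pearson_r(x, [1, 4, 5, 4, 1]))   # 0.0  (curved pattern, no linear relationship)
```

The third case is why a coefficient near 0 should be read as "no linear relationship" rather than "no relationship"; plotting the data first is a sensible check.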

Qualitative Research Designs

Case Study

A case study is an intensive investigation of a person, group, organization, event, or phenomenon, aimed at generating an in-depth, multi-faceted understanding of a complex issue in its real-life context. According to Yin (2014), case studies are appropriate for examining the “how” and “why” of contemporary events that are beyond the researcher’s control.

Case studies may be exploratory, descriptive, or explanatory, and they may involve a single unit or multiple units for comparison. This well-established design is used extensively in the social sciences, education, management, and health research.

Holistic Research

Holistic inquiry examines a phenomenon as a single whole rather than breaking it down into smaller parts. It highlights the complex relationships among many elements, acknowledging that these relationships cannot always be understood through straightforward cause-and-effect explanations.

This approach draws on phenomenology and systems theory, which emphasize understanding interconnected systems and lived experiences. It is widely used in fields including organizational analysis, health research, and indigenous studies.

The underlying premise is that any situation is shaped by many interacting elements. Researchers must therefore examine the situation as a whole, rather than only individual variables, to understand it thoroughly.

Oral History

As a research approach, oral history involves recording conversations between an interviewer and a narrator who has personal knowledge of historically significant events. Its aim is to enrich the historical record. This focus on preserving individual accounts as historical sources sets oral history apart from ordinary research interviews.

Ethically, informed consent and the protection of participants’ identities are crucial. Recordings are generally archived for future use by other scholars and the public.

Reflective Journal / Research Log

Throughout a study, researchers can capture their thoughts, observations, and interpretations in real time by keeping a reflective journal. This practice is closely related to reflexivity: the researcher’s critical evaluation of how their background, beliefs, and social position may affect the study and its findings.

In this journal, the researcher records activities, thoughts, and feelings while gathering and analyzing data. The journal provides a complete record of the research process, which enhances the study’s credibility. It can also serve as an important data source for approaches such as autoethnography.

Focus Group Discussion (FGD)

Focus group discussions are moderated group interviews conducted with a deliberately selected group of participants in order to collect a variety of perspectives on a specific topic. Groups of 6 to 10 participants are generally recommended. Groups of 6 to 8 are often considered most effective for keeping the discussion manageable while encouraging substantive conversation.

Thematic saturation is typically reached after three to six sessions. Focus groups are particularly useful for exploring attitudes, perceptions, and motives, and for understanding how individuals collectively construct meaning.

A trained moderator guides the discussion using a topic guide, while a co-facilitator takes field notes. The interaction among participants, rather than merely their individual responses, is a critical source of data.

In-Depth Interview (IDI)

An in-depth interview (IDI) is a one-on-one data collection method that allows the interviewer to obtain detailed, nuanced information from a single participant. IDIs are semi-structured or unstructured in nature. Unlike structured interviews (which follow a fixed questionnaire), IDIs use an interview guide with open-ended questions that the interviewer can adapt, probe, and follow as the conversation evolves.

This flexibility enables the researcher to explore important but unanticipated issues. IDIs may be conducted face to face, by telephone, or through online platforms. They are especially appropriate for sensitive topics, complex personal experiences, and situations in which individual differences in experience are expected and valued.

Observation

Observation is the systematic tracking and recording of actions, events, or phenomena in their natural setting, and it is central to ethnographic studies. It can be carried out from a distance (non-participant observation) or with active participation (participant observation).

Observation can also be structured, using a predefined schedule to record specific behaviors, or unstructured, recording all relevant behaviors as they occur. Participant observation is the fundamental approach of anthropological research. In it, the researcher immerses themselves in a social environment for a long period to gain an insider’s perspective.

The fundamental methodological challenge is balancing the researcher’s dual roles as participant and observer while avoiding reactivity. Reactivity is the term for changes in behavior that occur because people are aware of being observed.

Advantages of Research Design

Research design offers a multitude of advantages that contribute to the overall quality, credibility, and utility of a study. A carefully constructed research design is the very foundation upon which the integrity of the entire investigation rests.

Clear Direction and Focus

Research design offers guidance and clear direction to the research process, aiding in the selection of well-defined objectives and enabling the researcher to concentrate on specific research questions or hypotheses. Without a clearly articulated design, research risks becoming unfocused, leading to the collection of irrelevant data and conclusions that do not meaningfully address the original problem.

Control over Variables

A well-structured study design enables researchers to control variables, recognize confounding factors, and use randomization to reduce bias and strengthen internal validity. Even in non-experimental designs, control is achieved through careful sampling, standardized instruments, and statistical techniques such as regression analysis. These techniques allow the researcher to estimate the effect of key variables while holding others constant.

Replication and Verification

A clearly specified research design allows studies to be replicated, which helps confirm results and reduces the likelihood that findings are attributable to chance. A well-chosen design minimizes bias and inaccuracies. Detailed documentation of sampling procedures, instruments, and analysis techniques is essential to enable meaningful replication by independent researchers.

Validity Assurance

A study design helps ensure the validity of the investigation, that is, whether results genuinely represent the phenomena being researched. A sound design addresses both internal and external validity, selects appropriate instruments, and controls for confounding factors. In doing so, it makes the conclusions drawn meaningful, accurate, and trustworthy.

Reliability and Consistency

Research design reduces random error and helps ensure the consistency of research outcomes across time, samples, and situations. Reliability is built into the design through standardized, pre-tested instruments, clear operational definitions, well-trained data collectors, and systematic data verification procedures.

Efficiency in Resource Utilization

An effective research design enhances efficiency by enabling researchers to select the most suitable approach and optimize data use. By specifying the required data and methods in advance, research design saves time and resources. It eliminates redundant data collection and reduces the likelihood of costly methodological errors that would require the study to be repeated.

Minimization of Bias

A rigorously planned research design is one of the most effective safeguards against bias, which may arise at multiple stages:

- participant selection (sampling bias)
- instrument design (instrument bias)
- data collection (interviewer bias)
- interpretation or reporting (confirmation bias)

Probability sampling, anonymous administration, and pre-specified analytical procedures are key mechanisms for detecting and minimizing bias.

Ethical Safeguarding of Participants

A properly constructed research design ensures that the rights, welfare, and dignity of research participants are protected throughout the study. It builds ethical protocols (informed consent, confidentiality, and the right to withdraw) into the design from the outset. This demonstrates institutional accountability and enhances the credibility and acceptability of the research findings.

Contribution to the Advancement of Knowledge

A well-executed research design generates new, reliable, and valid knowledge that advances understanding in a given field. Such findings can inform theory development, challenge prevailing assumptions, guide policy formulation, and serve as the foundation for future research, making the research design the very means by which scholarship progresses.